When working with analog signal glitching, the resulting output is often too corrupt for digital devices to understand. They will most often blank out and report a lost signal, especially when the glitching becomes too extreme. To correct this, you need a device called a time base corrector (TBC), which re-times the unstable signal so that it has stable sync again. This video on YouTube does a good job of explaining TBCs and gives examples of some of the more popular ones.

Based on the video’s recommendations, I was able to snag a Sima SFX9 off eBay. They’re rather hard to come by now, so I was happy to find one in working condition.

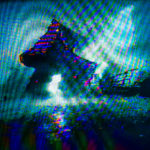

The SFX9 has some pretty cool features for video mixing. I set it up going into my CRT first just to make sure it worked. Using the two channels, I created a loop for the glitching: the dry signal goes into Video1, and I then use that to make the wet signal going into Video2. This allows me to mix, wipe, and key the wet signal as an overlay on the dry one.
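For anyone who thinks better in code, here is a rough sketch of what mix, wipe, and key mean for a dry and a wet frame. This is only an illustration, not how the SFX9 works internally: the function names, the tiny grayscale frames (lists of brightness values from 0 to 255), and the threshold are all made up for the example.

```python
# Illustrative only: frames are small grayscale grids (0-255), not real video.

def mix(dry, wet, amount):
    """Crossfade: amount=0.0 gives only dry, amount=1.0 gives only wet."""
    return [
        [round((1 - amount) * d + amount * w) for d, w in zip(dry_row, wet_row)]
        for dry_row, wet_row in zip(dry, wet)
    ]

def wipe(dry, wet, position):
    """Horizontal wipe: columns left of `position` show wet, the rest show dry."""
    return [
        wet_row[:position] + dry_row[position:]
        for dry_row, wet_row in zip(dry, wet)
    ]

def luma_key(dry, wet, threshold):
    """Luma key: wet pixels brighter than `threshold` are laid over the dry frame."""
    return [
        [w if w > threshold else d for d, w in zip(dry_row, wet_row)]
        for dry_row, wet_row in zip(dry, wet)
    ]

# Hypothetical 2x4 frames standing in for the Video1 (dry) and Video2 (wet) inputs.
dry = [[10, 20, 30, 40], [50, 60, 70, 80]]
wet = [[200, 0, 255, 0], [0, 220, 0, 240]]

wiped = wipe(dry, wet, 2)        # left half wet, right half dry
keyed = luma_key(dry, wet, 128)  # only the bright glitch pixels overlay the dry frame
```

In the keyed result, only the bright parts of the glitched signal show up on top of the clean one, which is roughly the overlay effect described above.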

I didn’t capture my initial tests, but I was very happy with the results. The SFX9 has no apparent delay on the effects, and it handles the glitching wonderfully. However, the resulting output looks different from what I get when I go straight into the CRT from my circuit-bent equipment.

Even with the differences, I’m happy with the trade-off, since I can now use the setup with digital devices such as projectors and capture cards. This mixer gets me one step closer to performing live visuals.